Charles Stross writes about a problem near and dear to my heart — data preservation.

Stross starts off by mentioning an Observer article by British Library head Lynne Brindley, but Brindley annoys with material like this,

The 2000 Sydney Olympics was the first truly online games with more than 150 websites, but these sites disappeared overnight at the end of the games and the only record is held by the National Library of Australia.

. . .

People often assume that commercial organisations such as Google are collecting and archiving this kind of material – they are not. The task of capturing our online intellectual heritage and preserving it for the long term falls, quite rightly, to the same libraries and archives that have over centuries systematically collected books, periodicals, newspapers and recordings and which remain available in perpetuity, thanks to these institutions.

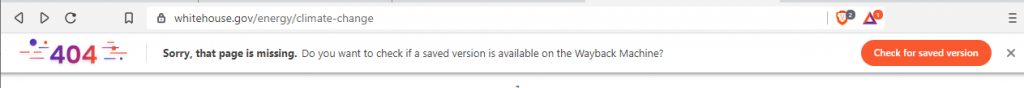

Not once does she mention Archive.org, the nonprofit effort to archive the web, which has been doing for years what librarians like Brindley are only now getting serious about. And, yes, Archive.org has a copy of the Sydney Olympic websites.

But, of course, replication is just part of the problem, and probably the easiest part to solve these days. Large-scale data storage keeps getting cheaper to build. Yes, there’s more data being produced, but the ability to store and use that data is scaling well.

Ensuring the data will still be useful 10 years from now is another matter entirely. Yes, the web is mostly HTML and JPEGs, but there is plenty of nonstandard data, from Word and PowerPoint files to JavaScript and Flash files, created with very different versions of software and often requiring specific versions of several programs to work properly together.

Stross looks at that problem from a personal level, noting the times he’s switched from one platform or program to another and the inherent difficulty of taking your data with you during that transition,

In the space of six years, I went through five word processing packages. Being naive at the time I didn’t export my files into ASCII when I moved from CP/M and LocoScript to MS-DOS. I learned better, and when I switched from Sprint to Word I halfway ASCII-fied those files; they’re a bit weird, but if I really wanted to I could get into them with Perl and mangle them into something editable. Along the way, I lost the 3″ floppies from the PCW. Then I had a hard disk die on me — in those days, the MTBF of hard drives was around 10,000 hours — and it took the only copy of most of the early work with it.

Score to 1993: two years’ work is 90% lost. And a subsequent five years’ work is accessible, kinda-sorta, if I want to strip out all the formatting codes and revert to raw ASCII.

Been there, though not quite to the same extent as Stross. I made sure to keep printouts of everything I wrote on the proprietary word processors I used back in the 1980s, though having PDF scans of those printouts is significantly less useful than just having plain ASCII files.

Having been burned by data loss, Stross describes how he tries to prevent that from happening again,

As a matter of personal policy, for those activities that involve creating data, I aim to use only software that is (a) cross-platform, (b) uses open or well-published file formats, and (c) ideally is free software.

This is in some ways a handicap; Thunderbird (my mail client of choice) and OpenOffice aren’t as colourful and feature-rich as, say, Apple’s Mail.app or Microsoft’s latest Word. However …

Firstly, they run on Macs, Linux systems, Windows PCs, and even on some other minority platforms. This protects my data from being held to ransom by an operating system vendor.

Secondly, they use open file formats. Thunderbird stores mailboxes internally in mbox format, with a secondary file to provide metadata. (This means I can claw back my email if I ever decide to abandon the platform.) OpenOffice uses OASIS, an ISO standard for word processing files (XML, style sheet, and other sub-files stored within a zip archive, if you need to go digging inside one). I can rip my raw and bleeding text right out of an OASIS file using command line tools if I need to. (Or simply tell OpenOffice to export it into RTF.)

Thirdly, they’re both open source projects and thus the developers have no incentive to lock me in so that they can charge me rent. I don’t mind paying for software; where an essential piece of free software has a tipjar on the developer’s website, I will on occasion use it. And I’m writing this screed on a Mac, running OS/X; itself a proprietary platform. But the software I use for my work is open — because these projects are technology driven rather than marketing driven, so they’ve got no motivation to lock me in and no reason to force me onto a compulsory (and expensive) upgrade treadmill.

I’ll make exceptions to this personal policy if no tool exists for the job that meets my criteria — but given a choice between a second-rate tool that doesn’t try to steal my data and blackmail me into paying rent and a first-rate tool that locks me in, I’ll take the honest one every time. And I’ll make a big exception to it for activities that don’t involve acts of creation on my part. I see no reason not to use proprietary games consoles, or ebook readers that display files in a non-extractable format (as opposed to DRM, which is just plain evil all of the time). But if I created a work I damn well own it, and I’ll go back to using a manual typewriter if necessary, rather than let a large corporation pry it from my possession and charge me rent for access to it.

All very good ideas. For text, I do everything in a text editor. If I need to pretty it up, I’ll import it into OpenOffice or something afterwards, but there’s something especially useful about plain old ASCII.
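To make Stross’s point about open formats concrete, here is a rough sketch of ripping the text out of an OpenOffice document with nothing but Python’s standard library. It leans on what he describes: the file is a zip archive with XML inside. The function name `odt_to_plain_text` is my own invention, and I’m assuming the body text lives in `content.xml` under the OpenDocument text namespace, which is how the .odt files I’ve looked at are laid out. It is deliberately crude: tabs and line breaks stored as their own elements are dropped, and text nested inside footnotes or frames may show up twice.

```python
import zipfile
import xml.etree.ElementTree as ET

# Namespace OpenDocument uses for text elements such as <text:p> and <text:h>.
TEXT_NS = "urn:oasis:names:tc:opendocument:xmlns:text:1.0"


def odt_to_plain_text(source):
    """Return the paragraphs and headings of an .odt file as plain text.

    `source` may be a file path or a binary file-like object.
    (Hypothetical helper for illustration, not part of any library.)
    """
    with zipfile.ZipFile(source) as archive:
        # Assumption: the document body is stored as content.xml in the archive.
        xml_bytes = archive.read("content.xml")

    root = ET.fromstring(xml_bytes)
    wanted = {f"{{{TEXT_NS}}}p", f"{{{TEXT_NS}}}h"}
    lines = []
    for element in root.iter():
        if element.tag in wanted:
            # itertext() flattens nested spans, discarding the formatting markup.
            lines.append("".join(element.itertext()))
    return "\n".join(lines)
```

Calling something like `print(odt_to_plain_text("draft.odt"))` (the file name is just a placeholder) should dump the prose one paragraph per line, which is about as close to plain old ASCII as you can get without opening the word processor at all.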

And for those situations where a closed-source, proprietary file format is the only possibility, make damn sure you have a relatively open alternative representation of your work. I use a modified CAD program, for example, and I have no idea whether its file format is closed or open, but I damn well make sure I have JPEG and PDF versions of every project I’ve done, in case the company goes belly up and 10 years from now I don’t have anything that can open the original files.